Agentic Workflows vs. Autonomous Workflows: What's the Difference and Why It Matters

The terms "agentic workflows" and "autonomous workflows" are often used interchangeably. They shouldn't be.

The distinction isn't just semantic. It reflects two different design dimensions: how the work is structured, and how much of it runs without human sign-off. Each comes with its own risk profile, its own failure modes, and its own place in a real product or team. Getting clear on what each one means is the first step toward deploying either one correctly.

What Is an Agentic Workflow?

An agentic workflow is one where an AI agent takes a sequence of actions to accomplish a goal — reading files, calling tools, making decisions, producing outputs — rather than generating a single response to a single prompt.

The defining characteristic is multi-step reasoning with tool use. The agent doesn't just answer a question. It does work:

- → It reads the relevant files before suggesting a change

- → It runs a test suite after making that change

- → It drafts a follow-up message if the test fails

- → It marks the task complete when everything passes

This is qualitatively different from a chatbot conversation. The agent is operating in an environment, not just a dialogue. It has actions, state, and consequences.
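
The four steps above can be sketched as a small loop over shared state. This is a minimal illustration, not a real agent framework: `AgentState`, `run_workflow`, and the injected `run_tests` callable are hypothetical names, and the "read" and "apply" steps are stubbed out as log entries.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    """Everything the agent has done so far (hypothetical structure)."""
    task: str
    log: list[str] = field(default_factory=list)
    done: bool = False


def run_workflow(state: AgentState, run_tests: Callable[[], bool]) -> AgentState:
    # Read the relevant files before suggesting a change (stubbed).
    state.log.append("read files")
    # Make the change, then run the test suite.
    state.log.append("apply change")
    passed = run_tests()
    state.log.append(f"tests {'passed' if passed else 'failed'}")
    if passed:
        # Mark the task complete when everything passes.
        state.done = True
        state.log.append("task complete")
    else:
        # Draft a follow-up message if the tests fail.
        state.log.append("draft follow-up message")
    return state
```

The point of the sketch is the shape: actions, state that persists between them, and a branch whose outcome depends on what happened in the environment.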

Agentic workflows can be human-in-the-loop or autonomous. That's where the second term comes in.

What Is an Autonomous Workflow?

An autonomous workflow is one where the agent executes its sequence of actions without requiring human approval at intermediate steps.

Autonomy isn't binary — it exists on a spectrum:

- → Fully supervised: Every action requires human confirmation before it executes

- → Supervised with exceptions: The agent proceeds autonomously within defined boundaries; anything outside those boundaries triggers a human check

- → Mostly autonomous: The agent makes most decisions independently; humans review the final output

- → Fully autonomous: The agent runs end-to-end with no human checkpoints

The autonomous end of the spectrum is powerful. It's also where failures tend to be hardest to catch: not because agents are unreliable in general, but because full autonomy removes the feedback loops that catch errors before they compound.
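
The four levels can be written down as an explicit setting rather than an informal habit. The sketch below is one possible encoding. The `Autonomy` enum, the `needs_human` function, and the two flags (`in_bounds`, `is_final_output`) are assumptions made for illustration.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    FULLY_SUPERVISED = 0
    SUPERVISED_WITH_EXCEPTIONS = 1
    MOSTLY_AUTONOMOUS = 2
    FULLY_AUTONOMOUS = 3


def needs_human(level: Autonomy, *, in_bounds: bool, is_final_output: bool) -> bool:
    """Return True if a human must approve before this step proceeds."""
    if level is Autonomy.FULLY_SUPERVISED:
        return True                 # every action is confirmed
    if level is Autonomy.SUPERVISED_WITH_EXCEPTIONS:
        return not in_bounds        # only out-of-bounds actions are checked
    if level is Autonomy.MOSTLY_AUTONOMOUS:
        return is_final_output      # humans review only the final output
    return False                    # fully autonomous: no checkpoints
```

Treating the level as configuration makes it easy to move a workflow one notch along the spectrum without rewriting it.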

The Relationship Between Them

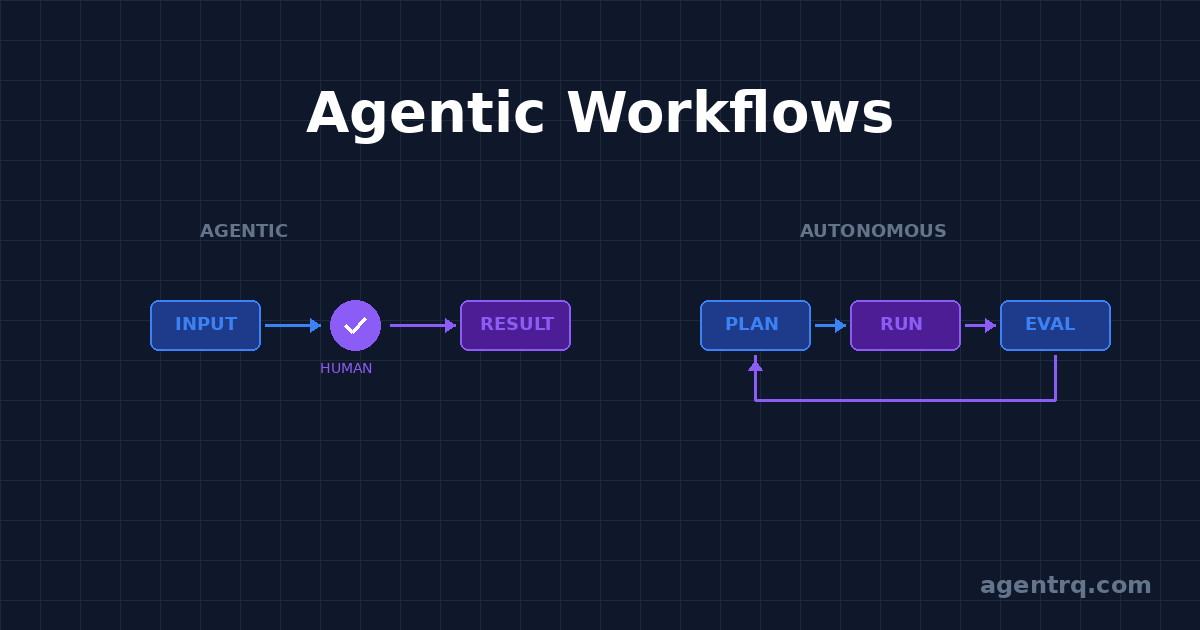

Every autonomous workflow is an agentic workflow. Not every agentic workflow is autonomous.

Agentic describes the *structure* of the workflow: multi-step, tool-using, goal-directed. Autonomous describes the *degree of human involvement*: how many checkpoints exist, and where.

A code review pipeline that reads a PR, identifies issues, drafts comments, and then waits for a human to approve before posting — that's an agentic workflow. It's not autonomous; it has a human gate before the consequential action.

The same pipeline, configured to post comments directly without approval — that's an autonomous workflow. Same agent, same tools, different human-in-the-loop configuration.
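
In code, the difference between the two pipelines can be as small as one optional argument. The sketch below is hypothetical: `review_pr`, `post`, and `approve` are illustrative names, and the TODO check stands in for whatever analysis a real agent would do.

```python
from typing import Callable, Optional


def review_pr(
    diff: str,
    post: Callable[[str], None],
    approve: Optional[Callable[[str], bool]] = None,
) -> bool:
    """Draft a review comment and post it; return True if it was posted.

    With `approve` set, the workflow is agentic with a human gate.
    With `approve=None`, the same workflow runs autonomously.
    """
    # Stand-in for the agent's analysis: flag leftover TODO markers.
    issues = [line for line in diff.splitlines() if "TODO" in line]
    comment = f"Found {len(issues)} TODO line(s) in this PR."
    if approve is not None and not approve(comment):
        return False  # the human rejected the draft; nothing is posted
    post(comment)
    return True
```

Calling `review_pr(diff, post)` runs the autonomous variant. Passing `approve=ask_reviewer` (any callable that returns a bool) turns the same code into the gated one.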

This matters because teams often think they need to choose between "using AI" and "staying in control." They don't. The agentic/autonomous distinction gives you a more precise set of dials.

Why the Distinction Matters in Practice

1. Failure modes are different

In a supervised agentic workflow, the human checkpoint is a circuit breaker. If the agent misunderstood the task or made a wrong assumption, you catch it before it propagates.

In an autonomous workflow, errors compound. The agent acts on its own interpretation, and subsequent steps build on that interpretation. By the time you see the output, the mistake may be several steps deep — harder to diagnose, harder to reverse.

This doesn't mean autonomous workflows are bad. It means they require higher confidence in the agent's task understanding, better-defined scope, and cleaner rollback paths.
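
A rough way to see why errors compound: suppose, purely for illustration, that each step is right 95% of the time and that steps succeed or fail independently. Both the 95% figure and the independence assumption are hypothetical, not measurements of any real agent.

```python
def chance_all_steps_correct(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step is right, assuming independent steps."""
    return per_step_accuracy ** steps


for steps in (1, 5, 10, 20):
    print(steps, round(chance_all_steps_correct(0.95, steps), 2))
```

Under those assumptions, a ten-step run gets every step right only about 60% of the time. A checkpoint partway through resets that decay, which is why scope and rollback matter more as autonomy increases.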

2. Trust is built incrementally

Almost no workflow should start fully autonomous. The practical path is:

- Run the agentic workflow fully supervised — approve every action

- Identify which approvals are always rubber-stamped (low judgment, low risk)

- Automate those checkpoints

- Keep human gates on actions that require real judgment or have irreversible consequences

Teams that try to skip straight to autonomous execution usually end up pulling back after the first significant mistake. The incremental path is slower to set up but much more reliable in production.
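
Finding the rubber-stamped approvals can itself be mechanical if the supervised phase keeps a log. The sketch below assumes a hypothetical log of `(action_type, approved)` pairs. The `min_samples` threshold of 20 is an arbitrary placeholder to tune against your own risk tolerance.

```python
from collections import defaultdict


def rubber_stamped(history, irreversible, min_samples=20):
    """Return action types that were always approved and are safe to automate.

    history: iterable of (action_type, approved) pairs from supervised runs.
    irreversible: action types that keep a human gate no matter what.
    min_samples: hypothetical minimum number of observations required.
    """
    counts = defaultdict(lambda: [0, 0])  # action_type -> [approved, total]
    for action_type, approved in history:
        counts[action_type][0] += int(approved)
        counts[action_type][1] += 1
    return sorted(
        action
        for action, (ok, total) in counts.items()
        if total >= min_samples and ok == total and action not in irreversible
    )
```

Anything the function returns is a candidate for automation. Anything it leaves out, including every irreversible action, keeps its gate.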

3. Different tasks suit different autonomy levels

Not all work should be autonomous, even when you trust the agent.

Good candidates for high autonomy:

- → Mechanical, rule-based tasks (linting, formatting, link checking)

- → Low-stakes, easily reversible actions (drafting a document, generating a summary)

- → Well-scoped tasks with clear success criteria (run tests, open a PR)

Keep humans in the loop for:

- → Actions visible to others (sending messages, posting to external services)

- → Irreversible operations (deleting data, pushing to production)

- → Tasks requiring judgment calls that depend on context the agent might not have
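
The two lists above reduce to a simple rule that can be checked per action. This is a sketch with hypothetical names (`Action`, `requires_human_gate`). It assumes each action has been labeled by hand with the three properties.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    reversible: bool
    visible_to_others: bool
    needs_judgment: bool


def requires_human_gate(action: Action) -> bool:
    """Gate anything irreversible, externally visible, or judgment-heavy."""
    return (not action.reversible) or action.visible_to_others or action.needs_judgment
```

For example, `Action("run_linter", True, False, False)` passes without a gate, while `Action("delete_data", False, False, False)` does not.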

The Human-in-the-Loop Architecture

The phrase "human in the loop" is often framed as a limitation — a concession to the fact that AI isn't good enough yet to run fully autonomously. That framing gets it backwards.

Human-in-the-loop is an architectural choice, not a fallback. The goal isn't to remove humans from the loop as quickly as possible. The goal is to place humans at the points in the loop where human judgment actually adds value — and let the agent handle everything else.

That means:

- → The agent does the research, the drafting, the mechanical execution

- → The human makes the calls that depend on context, stakes, or relationships the agent can't fully evaluate

- → The agent continues from there

In well-designed agentic workflows, the human isn't a bottleneck. They're a high-leverage decision point. The agent surfaces the right choice at the right moment. The human confirms or redirects. The work continues.
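
One way to model "the agent surfaces a choice, the human confirms or redirects, the work continues" is a workflow that pauses and resumes. The sketch below uses a plain Python generator for this. `draft_and_send` and `run_with_human` are hypothetical names, and a real system would persist the paused state rather than hold it in memory.

```python
def draft_and_send(topic):
    """Agent workflow that pauses once for a human decision."""
    draft = f"Draft announcement about {topic}"  # the agent does the drafting
    decision = yield draft                       # hand off and wait for the human
    if decision == "approve":
        return f"sent: {draft}"
    # Any other reply is treated as a redirect and folded into a revision.
    return f"revised per feedback: {decision}"


def run_with_human(workflow, decide):
    """Drive the workflow, calling `decide` at the single checkpoint."""
    proposal = next(workflow)            # run until the agent needs input
    try:
        workflow.send(decide(proposal))  # resume with the human's answer
    except StopIteration as finished:
        return finished.value
    raise RuntimeError("workflow paused more than once")
```

The human sees one proposal and gives one short answer. Everything before and after the `yield` is the agent's job.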

Agentic Workflows in Teams vs. Solo

The architecture looks different depending on scale.

Solo operators tend to run one or two workspaces, each focused on a domain. The human-in-the-loop is literally one person — you. Tasks queue up, you approve from your phone, work ships. The agentic workflow functions as a force multiplier: one person with well-configured agents can cover ground that previously required a team.

Small teams can assign agents to specific domains — one for marketing, one for infrastructure, one for customer-facing content — each with its own mission context and approval gates. The agents work in parallel. Humans are notified when their specific judgment is needed.

Larger organizations need to think harder about scope isolation. An agent that touches one system shouldn't be able to affect another without an explicit handoff. Agentic workflows at scale require the same kind of boundary thinking as distributed systems.
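
Scope isolation with explicit handoffs can be sketched in a few lines. `ScopedAgent` and its `outbox` are hypothetical. The idea is only that an out-of-scope request becomes a recorded handoff instead of a direct action.

```python
class ScopedAgent:
    """Agent that may only touch systems inside its own scope."""

    def __init__(self, name, allowed_systems):
        self.name = name
        self.allowed_systems = frozenset(allowed_systems)
        self.outbox = []  # explicit handoffs to whoever owns the other system

    def act(self, system, operation):
        if system not in self.allowed_systems:
            # Out of scope: record a handoff instead of acting directly.
            self.outbox.append((system, operation))
            return f"handoff requested: {system}"
        return f"{self.name} ran {operation} on {system}"
```

A marketing agent scoped to `{"cms"}` can publish a draft, but a request against `"billing"` lands in its outbox for the owning team or agent to pick up.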

What Makes an Agentic Workflow Actually Work

Once the architecture is chosen, configuration decides the outcome. An agentic workflow lives or dies on:

Clear mission scope. The agent needs to know what it's responsible for and what's out of scope. Ambiguous scope produces ambiguous work — or worse, confident work in the wrong direction.

Defined approval gates. Every workflow should have explicit points where human review is required. Not because the agent will fail, but because those are the moments where your judgment adds the most value.

Workspace context. An agent that knows the project's history, conventions, and decisions produces fundamentally better output than one starting cold. The quality gap between a context-aware agentic workflow and a zero-context one is larger than most people expect.

Clean handoff points. When the agent finishes a step and needs input, the handoff should be frictionless. A reply from your phone should be enough to unblock it. If the handoff requires a multi-paragraph explanation, the workflow is poorly scoped.
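
These requirements can be captured in a small configuration object that is checked before the first run. The sketch below is illustrative only: `WorkflowConfig`, its field names, and the specific checks are assumptions, not a format any particular tool requires.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowConfig:
    mission: str               # what the agent is responsible for
    out_of_scope: list[str]    # what it must not touch
    approval_gates: list[str]  # actions that always wait for a human
    context_files: list[str] = field(default_factory=list)  # history, conventions

    def problems(self) -> list[str]:
        """List configuration gaps worth fixing before the first run."""
        found = []
        if not self.mission.strip():
            found.append("mission scope is empty")
        if not self.approval_gates:
            found.append("no approval gates defined")
        if not self.context_files:
            found.append("agent starts with zero context")
        return found
```

An empty result from `problems()` doesn't guarantee a good workflow, but a non-empty one is a cheap early warning.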

Where This Is Going

The trajectory of agentic and autonomous workflows is toward more capable agents operating in longer-horizon tasks — handling hours or days of work rather than minutes. This makes the architecture questions more important, not less.

As task horizons extend, the cost of a wrong assumption at step one grows dramatically. A misunderstanding that costs you 30 seconds in a short task costs you hours in a long one. The human-in-the-loop checkpoint at the beginning of a long task is worth more, not less, than the checkpoint in a short one.

The teams that will get the most out of agentic AI aren't the ones that automate the human out of the loop as fast as possible. They're the ones that build workflows where human judgment is applied precisely — at the right moments, on the right decisions — while the agent handles everything in between.

That's the difference between autonomous for autonomy's sake and agentic for results.